This article gives you 14 curated projects organized by difficulty, with free datasets, starter code, time estimates, and advice on turning each one into a real portfolio piece. Every project here teaches transferable skills and gives you something concrete to show hiring managers.

If you have ever closed a "best ML projects" list feeling more overwhelmed than when you opened it, you are in the right place. We have cut the list down to the projects worth your time — the ones that teach transferable skills and give you something concrete to show hiring managers.

What's in this guide

- What you need before starting an ML project

- Beginner machine learning projects

- Intermediate machine learning projects

- Advanced machine learning projects

- How to choose the right machine learning project for your goals

- How to find a project idea that is uniquely yours

- Presenting your projects so they get you interviews

- Frequently asked questions

What you need before starting an ML project

Before picking a project, a quick honest check: your results will frustrate you if you jump in missing foundational pieces.

For beginner projects, you need Python basics, pandas, NumPy, a working understanding of mean and variance, and the ability to load and clean a dataset. For intermediate projects, add scikit-learn familiarity, train/test splitting, and evaluation metrics like accuracy, RMSE, and F1.

If any of that feels shaky, Dataquest's Machine Learning in Python skill path builds every one of those skills from the ground up, with hands-on projects included.

Beginner machine learning projects

These five projects are the right starting point if you can load a CSV, write a loop, and follow along with a scikit-learn tutorial. Each one is self-contained, uses a free publicly available dataset, and teaches a core ML concept that comes up in real jobs.

| Project | Key Skill | Dataset | Time Estimate |
|---|---|---|---|
| Customer Churn Prediction | Classification | Kaggle (Telco) | 6–10 hrs |
| Heart Disease Prediction | KNN | UCI | 5–8 hrs |
| House Price Prediction | Linear Regression | Kaggle (Ames) | 6–10 hrs |
| SMS Spam Classifier | NLP / Text Classification | UCI (SMS) | 5–8 hrs |
| Customer Segmentation | Clustering / PCA | Kaggle (Mall) | 5–8 hrs |

1. Customer Churn Prediction ● ○ ○

Predict which customers are likely to leave a subscription service before they do. This is one of the most common ML use cases in real businesses, which makes it immediately relevant in an interview.

Skills you'll practice: logistic regression · scikit-learn · pandas · imbalanced-learn

Dataset: Telco Customer Churn (Kaggle)

Take it further: Add a Streamlit dashboard showing which customers are highest risk. Dataquest's Streamlit with AI course walks you through building exactly that kind of interactive app.

Why employers care: Customer retention is one of the highest-value ML applications in business. Demonstrating you understand class imbalance and can frame results for a non-technical audience signals readiness.

Where to start: Dataquest's Introduction to Supervised ML in Python course covers the classification foundations you need.
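To make the class-imbalance point concrete, here is a minimal sketch. It assumes synthetic data from `make_classification` as a stand-in for the Telco CSV (the 85/15 class split and feature counts are illustrative, not taken from the dataset) and compares recall on the minority class with and without `class_weight="balanced"`.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for churn data: roughly 15% positives (churners).
X, y = make_classification(n_samples=4000, n_features=10, n_informative=5,
                           weights=[0.85, 0.15], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# Same model twice; the second reweights errors on the rare class.
plain = LogisticRegression(max_iter=1000).fit(X_train, y_train)
balanced = LogisticRegression(max_iter=1000, class_weight="balanced").fit(X_train, y_train)

recall_plain = recall_score(y_test, plain.predict(X_test))
recall_balanced = recall_score(y_test, balanced.predict(X_test))
print(f"recall without weighting:            {recall_plain:.2f}")
print(f"recall with class_weight='balanced': {recall_balanced:.2f}")
```

Reweighting usually trades some precision for recall, so a real write-up would report both and say which one the business cares about.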

2. Heart Disease Prediction ● ○ ○

Classify patients as high or low heart disease risk using anonymized medical data. The healthcare domain gives this project built-in seriousness, and precision/recall tradeoffs become very concrete when false negatives have real consequences.

Skills you'll practice: KNN · scikit-learn · pandas · confusion matrix · precision/recall

Dataset: UCI Heart Disease Dataset

Take it further: Compare KNN performance against logistic regression and random forest. A model comparison section makes a much stronger portfolio piece than a single model in isolation.

Why employers care: Healthcare ML roles, and roles in any domain where false negatives carry serious consequences, require candidates who understand evaluation beyond accuracy. Choosing precision and recall as your primary metrics, and explaining why, demonstrates exactly that kind of judgment.

Where to start: Dataquest's Predicting Heart Disease guided project walks you through the full workflow. For a step-by-step tutorial, follow along with the project walkthrough video.
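The evaluation idea can be sketched in a few lines. This example assumes synthetic data in place of the UCI file; the feature counts and `n_neighbors=7` are arbitrary choices for illustration. It scales features before KNN, then reads precision and recall straight off the confusion matrix.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import confusion_matrix, precision_score, recall_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for patient records (label 1 = disease present).
X, y = make_classification(n_samples=600, n_features=8, n_informative=4, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=1)

# KNN is distance-based, so features are standardized first.
knn = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=7))
knn.fit(X_train, y_train)
preds = knn.predict(X_test)

# For binary labels, ravel() returns the counts in the order tn, fp, fn, tp.
tn, fp, fn, tp = confusion_matrix(y_test, preds).ravel()
precision = precision_score(y_test, preds)
recall = recall_score(y_test, preds)
print(f"false negatives (missed cases): {fn}, false positives: {fp}")
print(f"precision: {precision:.2f}, recall: {recall:.2f}")
```

In a screening context the false-negative count is usually the number to discuss first, since those are the patients the model missed.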

3. House Price Prediction ● ○ ○

Predict home sale prices from features like square footage, location, and number of rooms. Linear regression on housing data is a classic for a reason. It teaches feature engineering, evaluation metrics, and how to explain a model's output in plain terms.

Skills you'll practice: linear regression · feature engineering · pandas · matplotlib · RMSE/MAE

Dataset: Ames Housing Dataset (Kaggle). Use the Ames Housing dataset, not the older Boston Housing dataset, which was removed from scikit-learn in version 1.2 because of ethical concerns with one of its features.

Take it further: Add polynomial features or experiment with Ridge and Lasso regularization to see how they affect predictions on the test set.

Why employers care: Feature engineering and regression evaluation are foundational skills in data science and analytics roles. The Ames dataset is rich enough to show real feature engineering decisions, which is what separates this project from a tutorial clone.

Where to start: Take Dataquest's Linear Regression Modeling in Python course, or watch the project walkthrough video.
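A rough sketch of the regularization comparison suggested above. It assumes synthetic regression data with many uninformative features rather than the Ames columns, and the `alpha` values are arbitrary starting points, not tuned settings.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, LinearRegression, Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# 40 features, only 8 of which carry signal.
X, y = make_regression(n_samples=300, n_features=40, n_informative=8,
                       noise=25.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)

models = {
    "linear": LinearRegression(),
    "ridge": Ridge(alpha=10.0),
    "lasso": Lasso(alpha=1.0),
}
rmse = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    rmse[name] = float(np.sqrt(mean_squared_error(y_test, model.predict(X_test))))
    print(f"{name}: test RMSE = {rmse[name]:.1f}")

# Lasso can set coefficients exactly to zero, which acts as feature selection.
n_zero = int(np.sum(models["lasso"].coef_ == 0))
print(f"lasso zeroed {n_zero} of {X.shape[1]} coefficients")
```

On real housing data you would pick `alpha` by cross-validation (for example with `RidgeCV` or `LassoCV`) instead of fixing it by hand.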

4. SMS Spam Classifier ● ○ ○

Classify SMS messages as spam or not spam based on their text content. This is a gentle introduction to NLP that teaches you to turn raw text into numbers without needing any deep learning background.

Skills you'll practice: TF-IDF · Naive Bayes · text preprocessing · scikit-learn · classification metrics

Dataset: SMS Spam Collection — UCI

Starter code:

```python
import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics import classification_report
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import MultinomialNB

# Assumes the Kaggle CSV export of the dataset, which names its columns v1 (label) and v2 (text).
df = pd.read_csv("spam.csv", encoding="latin-1")[["v1", "v2"]]
df.columns = ["label", "text"]
df["label"] = (df["label"] == "spam").astype(int)  # spam = 1, ham = 0

# Stratify so both splits keep the same spam ratio; fix the seed for reproducibility.
X_train, X_test, y_train, y_test = train_test_split(
    df["text"], df["label"], test_size=0.2, stratify=df["label"], random_state=42
)

# Fit TF-IDF on the training text only, then reuse the fitted vocabulary on the test text.
vec = TfidfVectorizer(stop_words="english")
X_train_tfidf = vec.fit_transform(X_train)

model = MultinomialNB().fit(X_train_tfidf, y_train)
print(classification_report(y_test, model.predict(vec.transform(X_test))))
```

Take it further: Compare Naive Bayes against logistic regression, and try bigrams alongside unigrams. Visualize the most predictive words in each class with a bar chart.

Why employers care: Text classification appears in everything from customer support automation to content moderation. Understanding TF-IDF and basic NLP pipelines is a genuine job skill.

Where to start: The scikit-learn text data tutorial walks you through the full workflow using official documentation.

5. Customer Segmentation ● ○ ○

Group customers by purchasing behavior without any labeled data—your first unsupervised learning project. Understanding how to interpret clustering results in business terms is a skill that transfers well to analytics and data science roles.

Skills you'll practice: K-means · PCA · scikit-learn · matplotlib/seaborn

Dataset: Mall Customers (Kaggle) or Dataquest's credit card customer segmentation guided project.

Take it further: Use the elbow method to choose the optimal number of clusters, then visualize segment profiles with a labeled scatter plot. A visualization that tells a story about customer behavior is portfolio gold.

Why employers care: Unsupervised learning is underrepresented in most beginner portfolios, and the ability to translate cluster results into business language (think "high-value loyalists" or "at-risk bargain hunters") is exactly the kind of analytical communication that marketing and product teams hire for.

Where to start: Take Dataquest's Introduction to Unsupervised ML in Python course, or watch the project walkthrough video.
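The elbow method and the PCA projection mentioned above might look like this. The sketch assumes synthetic blobs from `make_blobs` standing in for customer features; the choice of four clusters is known here only because the data was generated that way.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic "customers": 5 numeric features, 4 hidden groups.
X, _ = make_blobs(n_samples=500, centers=4, n_features=5, random_state=42)
X_scaled = StandardScaler().fit_transform(X)

# Elbow method: inertia (within-cluster sum of squares) for each candidate k.
inertias = {}
for k in range(1, 9):
    km = KMeans(n_clusters=k, n_init=10, random_state=42).fit(X_scaled)
    inertias[k] = km.inertia_
    print(f"k={k}: inertia={km.inertia_:.0f}")

# Fit the chosen k, then project to 2-D so the segments can be drawn as a scatter plot.
labels = KMeans(n_clusters=4, n_init=10, random_state=42).fit_predict(X_scaled)
coords = PCA(n_components=2).fit_transform(X_scaled)
```

Plotting `coords[:, 0]` against `coords[:, 1]` colored by `labels` gives the labeled scatter plot; the k where the inertia curve stops dropping sharply is the "elbow".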

Intermediate machine learning projects

These six projects introduce the complexity that separates classroom ML from real-world ML: messy data, multiple model comparison, NLP pipelines, imbalanced classes, and in one case, a full deployment layer. If you have completed two or three beginner projects and feel comfortable with scikit-learn, you are ready for these.

| Project | Key Skill | Key Challenge | Dataset | Time Estimate |
|---|---|---|---|---|
| Stack Overflow Developer Salary Prediction | ML Pipelines / XGBoost | Messy survey data | Stack Overflow Annual Survey | 10–15 hrs |
| Credit Card Fraud Detection | Ensemble Methods | Imbalanced classes | Kaggle | 8–12 hrs |
| Sentiment Analysis Pipeline with Deployment | NLP / Streamlit | Text preprocessing + deployment | Hugging Face | 10–15 hrs |
| Insurance Cost Forecasting | Multiple Regression | Interaction terms | Kaggle | 8–10 hrs |
| IPO Market Return Prediction | PyTorch | Neural network tuning | Included in the Dataquest guided project | 12–18 hrs |
| Stock Market Prediction with Backtesting | Random Forest / Backtesting | Temporal data leakage | yfinance (live) | 10–15 hrs |

6. Stack Overflow Developer Salary Prediction ● ● ○

Predict developer salaries using the Stack Overflow Annual Developer Survey—one of the richest, most realistic datasets available. The data is messy by design: missing values, inconsistent categorical responses, and hundreds of features to sift through. Building a clean scikit-learn pipeline that handles all of it is the whole point.

Skills you'll practice: scikit-learn pipelines · XGBoost · cross-validation · feature importance · categorical encoding

Dataset: Stack Overflow Annual Developer Survey (free, publicly released each year)

Take it further: Deploy your model as a Streamlit app where users enter their profile and see a salary estimate. A live app with a real-world dataset is a significantly stronger portfolio piece than a static notebook.

Why employers care: Scikit-learn pipelines and messy real-world data handling are now baseline expectations in ML engineering roles. A candidate who arrives knowing how to build a preprocessing pipeline, not just call model.fit() on a clean CSV, signals they are production-aware, not just course-trained.

Where to start: Dataquest's Machine Learning Analysis of Stack Overflow Survey Data guided project is free and takes you through the full pipeline build.
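As a rough illustration of what "a clean scikit-learn pipeline" means here, the sketch below imputes and encodes messy columns inside a `ColumnTransformer` and cross-validates the whole thing. The column names and data are hypothetical (not the real survey schema), and scikit-learn's `GradientBoostingRegressor` is swapped in for XGBoost so the example has no extra dependency.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.impute import SimpleImputer
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder

# Hypothetical survey-like data; salary depends on experience plus noise.
rng = np.random.default_rng(0)
n = 400
years = rng.integers(0, 30, n).astype(float)
salary = 40000 + 2500 * years + rng.normal(0, 5000, n)
df = pd.DataFrame({
    "years_code": years,
    "country": rng.choice(["US", "DE", "IN"], n),
    "remote": rng.choice(["yes", "no"], n),
})
# Knock out about 10% of each column to mimic skipped survey questions.
for col in df.columns:
    df.loc[rng.random(n) < 0.1, col] = np.nan

preprocess = ColumnTransformer([
    ("num", SimpleImputer(strategy="median"), ["years_code"]),
    ("cat", Pipeline([
        ("impute", SimpleImputer(strategy="constant", fill_value="missing")),
        ("onehot", OneHotEncoder(handle_unknown="ignore")),
    ]), ["country", "remote"]),
])
model = Pipeline([("prep", preprocess),
                  ("gbr", GradientBoostingRegressor(random_state=0))])

# Imputation and encoding are refit inside every fold, so nothing leaks from validation data.
scores = cross_val_score(model, df, salary, cv=5, scoring="r2")
print(f"mean R^2 across folds: {scores.mean():.2f}")
```

Because preprocessing lives inside the pipeline, the same object can later be saved and served without re-implementing the cleaning steps.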

7. Credit Card Fraud Detection ● ● ○

Classify fraudulent transactions in a dataset where fraud accounts for less than 0.2% of records. When your classes are this imbalanced, accuracy is a misleading metric — a model that predicts "not fraud" every single time scores 99.8% accuracy but catches nothing. Learning to navigate that tradeoff is a skill employers in finance and fintech specifically look for.

Skills you'll practice: SMOTE · ensemble methods · imbalanced-learn · precision/recall/AUC · threshold tuning

Dataset: Credit Card Fraud Detection (Kaggle)

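Starter code:

```python
import pandas as pd
from imblearn.over_sampling import SMOTE
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report, roc_auc_score
from sklearn.model_selection import train_test_split

# Assumes the Kaggle file creditcard.csv, where the "Class" column marks fraud as 1.
df = pd.read_csv("creditcard.csv")
X, y = df.drop(columns="Class"), df["Class"]
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, stratify=y, random_state=42)

# Oversample the training split only; the test split keeps its real class balance.
sm = SMOTE(random_state=42)
X_res, y_res = sm.fit_resample(X_train, y_train)

model = RandomForestClassifier(n_estimators=100, random_state=42).fit(X_res, y_res)
preds = model.predict(X_test)
print(classification_report(y_test, preds))
print(f"AUC-ROC: {roc_auc_score(y_test, model.predict_proba(X_test)[:, 1]):.4f}")
```

Take it further: Compare the effect of SMOTE oversampling vs. class-weight adjustment on recall. Document which performs better and why; that kind of analysis is what interviewers want to see.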

Why employers care: Class imbalance is a real problem in finance, healthcare, and fraud detection roles. Demonstrating you understand precision-recall tradeoffs (not just accuracy) signals production-level thinking.

Where to start: Kaggle's fraud detection starter notebooks give you a clean launchpad.
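Threshold tuning, listed in the skills above, can be sketched as follows. This assumes synthetic data with about 1% positives in place of the Kaggle file, and the 80% recall target is an arbitrary example of a business requirement.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import precision_recall_curve
from sklearn.model_selection import train_test_split

# Synthetic stand-in for transactions: about 1% fraud.
X, y = make_classification(n_samples=10000, n_features=12, n_informative=6,
                           weights=[0.99, 0.01], random_state=7)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=7)

clf = RandomForestClassifier(n_estimators=100, class_weight="balanced", random_state=7)
clf.fit(X_train, y_train)
scores = clf.predict_proba(X_test)[:, 1]

# precision and recall have one more entry than thresholds, so the last point is dropped.
precision, recall, thresholds = precision_recall_curve(y_test, scores)
target_recall = 0.80
meets_target = recall[:-1] >= target_recall

# Among thresholds that reach the recall target, keep the one with the highest precision.
best = int(np.argmax(precision[:-1] * meets_target))
chosen_threshold = float(thresholds[best])
print(f"threshold={chosen_threshold:.2f}, "
      f"precision={precision[best]:.2f}, recall={recall[best]:.2f}")
```

The default cutoff of 0.5 is rarely the right one on data this imbalanced; choosing the threshold from a stated recall target makes the tradeoff explicit. In a real project, choose it on a validation split rather than the test set.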

8. Sentiment Analysis Pipeline with Deployment ● ● ○

Build a complete NLP pipeline: take product reviews, preprocess the text, train a classifier, and deploy it as a working Streamlit app. The deployment step is what makes this intermediate rather than beginner: you are not just training a model, you are building something someone else can actually use.

Skills you'll practice: TF-IDF · scikit-learn or Hugging Face · Streamlit · model serialization

Dataset: Amazon Product Reviews — Hugging Face Datasets

Starter code:

```python
import joblib
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline

# X_train is a list of review texts and y_train the matching sentiment labels,
# prepared from the dataset beforehand.
pipeline = Pipeline([
    ("tfidf", TfidfVectorizer(max_features=10000, ngram_range=(1, 2))),
    ("clf", LogisticRegression(C=1.0, max_iter=500)),
])
pipeline.fit(X_train, y_train)

# Saving the whole pipeline keeps the vectorizer and classifier together.
joblib.dump(pipeline, "sentiment_model.pkl")

# Then load it in a Streamlit app: model = joblib.load("sentiment_model.pkl")
```

Take it further: Replace TF-IDF with a fine-tuned DistilBERT model. Accuracy will often improve noticeably, and having both versions lets you demonstrate trade-offs between simplicity and performance.

Why employers care: End-to-end ownership, from raw text to a deployed application, demonstrates software engineering awareness that purely analytical projects cannot.

Where to start: Hugging Face's sentiment analysis tutorial is a solid starting point.

9. Insurance Cost Forecasting ● ● ○

Predict individual medical insurance costs from demographic and lifestyle features like age, BMI, and smoking status. Multiple regression teaches you to think about how variables interact — a smoker's costs don't just add to their BMI costs, they multiply them — and how to communicate model results to a non-technical audience.

Skills you'll practice: multiple regression · interaction effects · residual analysis · matplotlib · model interpretation

Dataset: Medical Cost Personal Dataset (Kaggle) or Dataquest's predicting insurance costs guided project.

Take it further: Build a cost estimator Streamlit app where a user inputs their details and sees a premium estimate. Framing a regression model as a user-facing tool makes the project immediately understandable to any interviewer.

Why employers care: Model interpretation and communicating results to non-technical stakeholders are skills that separate analysts from ML practitioners. Employers in insurance, healthcare, and financial services specifically value candidates who can explain not just what a model predicts, but why a particular input drives the output in the direction it does.

Where to start: Dataquest's gradient descent and logistic regression courses in the Machine Learning in Python skill path build the regression foundation you need. Or, you can watch the predicting insurance cost project walkthrough video first.
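The smoker-by-BMI interaction described in this project can be illustrated with simulated data. The numbers below are invented for the sketch (they are not estimates from the Medical Cost dataset); the point is only how adding one product column changes what a linear model can capture.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
n = 1000
df = pd.DataFrame({
    "age": rng.integers(18, 65, n),
    "bmi": rng.normal(30, 6, n),
    "smoker": rng.integers(0, 2, n),
})
# Simulated charges in which BMI matters far more for smokers (an illustrative assumption).
charges = (250 * df["age"] + 50 * df["bmi"] + 1000 * df["smoker"]
           + 600 * df["smoker"] * df["bmi"] + rng.normal(0, 2000, n))

# Additive model: each feature gets one slope, regardless of the others.
additive = LinearRegression().fit(df, charges)
r2_additive = additive.score(df, charges)

# Interaction model: the extra column lets the BMI slope differ for smokers.
df_int = df.assign(smoker_x_bmi=df["smoker"] * df["bmi"])
interaction = LinearRegression().fit(df_int, charges)
r2_interaction = interaction.score(df_int, charges)

print(f"R^2 additive: {r2_additive:.3f}, with interaction: {r2_interaction:.3f}")
print(f"estimated extra cost per BMI point for smokers: {interaction.coef_[-1]:.0f}")
```

The last coefficient is the one to explain to a non-technical audience: it is the additional cost per BMI point that applies only to smokers.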

10. IPO Market Return Prediction ● ● ○

Predict whether an IPO will be profitable on its listing date using a regularized PyTorch neural network. This is your introduction to deep learning — and the finance domain makes the stakes feel real. Regularization techniques like dropout and batch normalization become much less abstract when you can see exactly how they affect your model's behavior on held-out data.

Skills you'll practice: PyTorch · dropout regularization · batch normalization · financial data preprocessing

Dataset: Indian IPO market data (included in the guided project)

Take it further: Experiment with different regularization strategies, such as L2 weight decay, different dropout rates, and early stopping, and compare their effects on validation loss. A rigorous ablation study makes this a strong portfolio piece.

Why employers care: PyTorch fluency and hands-on experience with regularization are increasingly expected for ML engineering and research roles. A finance-domain deep learning project is also a rare combination: most PyTorch beginner projects use image or text data, so the domain specificity alone makes this stand out.

Where to start: Dataquest's Predicting Listing Gains in the Indian IPO Market Using PyTorch guided project walks you through the full deep learning workflow. The project walkthrough video uses TensorFlow rather than PyTorch (the same approach in a different library), and it is a useful preview if you want to see the overall structure before you start.

11. Stock Market Prediction with Backtesting ● ● ○

Predict S&P 500 price movement using historical data and a random forest model, then validate it properly using backtesting. Most beginner stock prediction projects look accurate but are completely wrong because they leak future data into training. This project teaches you to avoid that trap, which is exactly what makes it intermediate rather than beginner.

Skills you'll practice: random forest · time series data handling · backtesting · rolling averages · yfinance · scikit-learn

Dataset: Live S&P 500 data fetched directly via yfinance. No separate download needed.

Take it further: Add additional predictors like volume trends, sector correlations, or macroeconomic indicators, and compare their effect on backtested performance. Document which features actually move the needle and why.

Why employers care: Backtesting is a fundamental concept in quantitative finance and any time series ML role. Candidates who understand why naive train/test splits fail on temporal data, and who can implement proper walk-forward validation, stand out immediately in fintech and financial services interviews.

Where to start: Dataquest's Predict the Stock Market Using Machine Learning guided project (premium) walks through all 8 steps including backtesting and adding rolling predictors. This project walkthrough video is free if you want to preview the approach first.
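Walk-forward backtesting can be sketched without any download. The example below assumes a synthetic random-walk price series in place of yfinance data, and the feature names, window sizes, and model settings are illustrative choices rather than the guided project's exact steps.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import precision_score

rng = np.random.default_rng(0)
# Synthetic geometric random walk standing in for daily closing prices.
close = pd.Series(100 * np.exp(np.cumsum(rng.normal(0, 0.01, 1500))),
                  index=pd.bdate_range("2015-01-01", periods=1500))

data = pd.DataFrame({"close": close})
data["ret_1"] = close.pct_change()                    # yesterday-to-today return
data["ratio_5"] = close / close.rolling(5).mean()     # uses current and past closes only
data["tomorrow"] = close.shift(-1)
data = data.dropna()
data["target"] = (data["tomorrow"] > data["close"]).astype(int)
features = ["ret_1", "ratio_5"]

def backtest(data, model, start=500, step=250):
    """Walk forward: train on all rows before each window, then predict that window."""
    chunks = []
    for i in range(start, len(data), step):
        train, test = data.iloc[:i], data.iloc[i:i + step]
        model.fit(train[features], train["target"])
        preds = pd.Series(model.predict(test[features]), index=test.index, name="pred")
        chunks.append(pd.concat([test["target"], preds], axis=1))
    return pd.concat(chunks)

model = RandomForestClassifier(n_estimators=100, min_samples_split=50, random_state=1)
results = backtest(data, model)
print(f"backtested precision: {precision_score(results['target'], results['pred']):.2f}")
```

On a pure random walk the precision should hover around 0.5, which is the honest baseline. A shuffled train/test split on real prices can look much better than that precisely because it lets the model see the future.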

Advanced machine learning projects that stand out on a resume

The most common advice in ML hiring is some version of "go beyond the Jupyter notebook." These three projects do that. They involve either production deployment, system design judgment, or an original problem you cared enough about to build from scratch.

| Project | Key Skill | Key Challenge | Time Estimate |
|---|---|---|---|
| RAG-Powered Document Q&A System | LangChain / Vector DBs / LLMs | System architecture + retrieval tuning | 20–40 hrs |
| End-to-End ML Pipeline with Deployment | FastAPI / Docker / MLflow | Production engineering | 20–35 hrs |
| Reinforcement Learning Agent | DQN / PPO / Gymnasium | Reward design + training stability | 25–40 hrs |

12. RAG-Powered Document Q&A System ● ● ●

Build a retrieval-augmented generation (RAG) system: upload any document, ask questions in plain English, get grounded answers. This means combining a vector database (FAISS or Chroma), an embedding model, and an LLM — either open-source via Hugging Face or through the OpenAI API — orchestrated with LangChain or LlamaIndex.

Skills you'll practice: LangChain / LlamaIndex · vector embeddings · FAISS / Chroma · Hugging Face or OpenAI API · Streamlit or Gradio

Dataset: Project Gutenberg, SEC EDGAR, arXiv.org, or any PDF corpus works: company reports, research papers, government legislation.

Why it stands out: RAG is the dominant pattern for production LLM applications in 2025–2026. Building one demonstrates ML depth, software engineering judgment, and awareness of how AI is actually being deployed — not just trained.

Why employers care: Few candidates at any level have built a full RAG pipeline end-to-end. LangChain, vector databases, and LLM orchestration are appearing in ML engineering job descriptions across the industry, so demonstrating hands-on experience with all three in a single project is a genuine differentiator.

Where to start: Dataquest's Building an AI Chatbot with Streamlit guided project, then the Generative AI Fundamentals skill track for the deeper RAG architecture.
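The retrieval half of RAG can be prototyped before touching a vector database. This sketch deliberately swaps in TF-IDF and cosine similarity for an embedding model plus FAISS or Chroma, and it stops at building the prompt (no LLM call). The chunks, function names, and prompt wording are all hypothetical.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics.pairwise import cosine_similarity

# Hypothetical document chunks; a real system would split uploaded files into these.
chunks = [
    "The company reported revenue of 12 million dollars in the third quarter.",
    "Employees may work remotely up to three days per week.",
    "The warranty covers manufacturing defects for two years after purchase.",
    "Quarterly revenue growth was driven by new subscription customers.",
]

# "Index" step: turn every chunk into a vector once, up front.
vectorizer = TfidfVectorizer(stop_words="english")
chunk_vectors = vectorizer.fit_transform(chunks)

def retrieve(question, k=2):
    """Return the k chunks most similar to the question."""
    q_vec = vectorizer.transform([question])
    sims = cosine_similarity(q_vec, chunk_vectors).ravel()
    top = sims.argsort()[::-1][:k]
    return [chunks[i] for i in top]

def build_prompt(question, k=2):
    """Assemble the grounded prompt that would be sent to an LLM."""
    context = "\n".join(f"- {c}" for c in retrieve(question, k))
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")

question = "How long does the warranty last?"
prompt = build_prompt(question)
print(prompt)
```

Replacing `vectorizer` with an embedding model and `cosine_similarity` with a vector store lookup keeps the same two-function shape, which makes retrieval quality easy to test in isolation.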

13. End-to-End ML Pipeline with Deployment and Monitoring ● ● ●

Take a trained model — your churn predictor or fraud detector from the intermediate tier — and turn it into a production API: a FastAPI endpoint, a Docker container, and basic prediction drift monitoring using MLflow. This is the project that shows you can ship.

Skills you'll practice: FastAPI · Docker · MLflow · basic drift detection · GitHub Actions (optional)

Why it stands out: Hiring managers can see a trained model in ten seconds. A containerized API with monitoring shows you understand what happens after training — and that you have thought about what breaks in production.

Why employers care: Training a model is table stakes. Containerizing it, serving it via an API, and monitoring it over time is what ML engineers actually do at work. This project is the clearest possible signal that you can ship, not just experiment, and that distinction is what separates ML engineers from ML students in most hiring decisions.

Where to start: The FastAPI official tutorial and MLflow quickstart are both free starting points.
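For the "basic drift detection" piece, one common and simple choice is the Population Stability Index (PSI), sketched below with NumPy only. The function name, bin count, and the 0.1 / 0.25 cutoffs in the closing note are rule-of-thumb assumptions, not part of any library's API.

```python
import numpy as np

def population_stability_index(reference, current, bins=10):
    """PSI between two samples, using quantile bins computed from the reference sample."""
    # Interior bin edges from reference quantiles; the outer bins are open-ended.
    inner_edges = np.quantile(reference, np.linspace(0, 1, bins + 1))[1:-1]

    def fractions(values):
        idx = np.searchsorted(inner_edges, values, side="right")
        return np.bincount(idx, minlength=bins) / len(values)

    eps = 1e-6  # avoids log(0) when a bin is empty
    ref_frac = np.clip(fractions(reference), eps, None)
    cur_frac = np.clip(fractions(current), eps, None)
    return float(np.sum((cur_frac - ref_frac) * np.log(cur_frac / ref_frac)))

rng = np.random.default_rng(0)
reference = rng.normal(0, 1, 5000)   # e.g. model scores at training time
same = rng.normal(0, 1, 5000)        # new data from the same distribution
shifted = rng.normal(1, 1, 5000)     # new data whose mean has drifted

psi_same = population_stability_index(reference, same)
psi_shifted = population_stability_index(reference, shifted)
print(f"PSI, no drift: {psi_same:.3f}")
print(f"PSI, shifted:  {psi_shifted:.3f}")
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift. The value can be computed on each batch of predictions and recorded as a metric (for example with `mlflow.log_metric`) so it shows up alongside the model's other tracking data.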

14. Reinforcement Learning Agent ● ● ●

Train an RL agent using DQN or PPO on a Gymnasium (formerly OpenAI Gym) environment or a custom optimization problem. Reinforcement learning is rarely covered in project lists, yet it represents a fundamentally different ML paradigm, one that is increasingly relevant in robotics, logistics, and autonomous systems.

Skills you'll practice: Gymnasium · Stable Baselines3 · DQN / PPO · reward engineering · training stability

Where to start: Gymnasium documentation and Stable Baselines3 tutorials are both free.

Why it stands out: RL projects signal intellectual range. Most ML portfolios are entirely supervised learning. A working RL agent shows you can reason about problems with delayed feedback and no labeled training data — a genuinely different mode of thinking.
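Before reaching for DQN or PPO, it can help to see the core update on a problem small enough to inspect by hand. The sketch below uses tabular Q-learning (a simpler relative of DQN, not DQN itself) on a hypothetical six-cell corridor written in plain NumPy, with no Gymnasium dependency; the reward values and hyperparameters are arbitrary.

```python
import numpy as np

n_states, n_actions, goal = 6, 2, 5     # corridor: start in cell 0, reward in cell 5
rng = np.random.default_rng(0)
Q = np.zeros((n_states, n_actions))     # one value per (state, action) pair
alpha, gamma, epsilon = 0.1, 0.95, 0.2  # learning rate, discount, exploration rate

def step(state, action):
    """Action 0 moves left, action 1 moves right; reaching the goal ends the episode."""
    nxt = min(max(state + (1 if action == 1 else -1), 0), goal)
    done = nxt == goal
    reward = 1.0 if done else -0.01     # small step penalty, delayed reward at the goal
    return nxt, reward, done

for episode in range(500):
    state = 0
    for _ in range(200):                # cap on episode length
        # Epsilon-greedy: mostly exploit the current estimate, sometimes explore.
        if rng.random() < epsilon:
            action = int(rng.integers(n_actions))
        else:
            action = int(np.argmax(Q[state]))
        nxt, reward, done = step(state, action)
        # Q-learning update: move the estimate toward reward + discounted best next value.
        target = reward + (0.0 if done else gamma * Q[nxt].max())
        Q[state, action] += alpha * (target - Q[state, action])
        state = nxt
        if done:
            break

policy = Q.argmax(axis=1)[:goal]        # greedy action in each non-terminal cell
print("greedy policy (1 = move right):", policy.tolist())
```

DQN replaces the `Q` table with a neural network so the same update can scale to state spaces too large to enumerate, which is where Stable Baselines3 comes in.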

How to choose the right machine learning project

Not all projects serve the same purpose. Picking a project that matches your actual goal saves you weeks of frustration and produces a much stronger result.

If your goal is learning a new skill, pick projects that stretch your current knowledge by exactly one level. You want a challenge, not a crisis.

If your goal is building a portfolio for job applications, skip the toy datasets and anything you followed step-by-step from a tutorial.

If your goal is preparing for interviews, prioritize projects with genuine business context, ones where you can explain why the problem matters, not just how you solved it.

Three qualities consistently separate stand-out portfolio projects from forgettable ones:

- The project solves a real problem (not just a textbook exercise).

- It is end-to-end (data collection through deployment or presentation).

- It reflects genuine curiosity.

Industry professionals frequently estimate that 60–80% of ML work is data cleaning and preparation—not modeling. Projects that include messy, real-world data with real feature engineering impress employers far more than projects built on clean Kaggle CSVs with 100% complete rows.

How to find a machine learning project idea that is uniquely yours

The most impressive portfolio projects are not the ones on this list. They are the ones you invented yourself. This is the advice that appears most frequently in ML hiring discussions and is almost entirely absent from project guides — so we are giving it its own section.

A developer with no formal degree and no bootcamp certificate landed multiple ML interviews based on a cycling wind tunnel AI and a road surface classification system. Not an MNIST digit classifier. Not a Kaggle competition. Original problems, collected data, and genuine curiosity. Hiring managers can feel the difference.

Here is a five-step framework for finding your own idea:

Step 1: List five things you are interested in or frustrated by. Cycling, cooking, fantasy football, your daily commute, local air quality, your dog's health, music discovery. Anything.

Step 2: For each, ask: what data exists? Strava exports, recipe databases, sports APIs, transit authority open data, Spotify API, weather stations, vet records. Most domains have more freely available data than people realize. Dataquest's free datasets guide is a good starting point.

Step 3: For each data source, ask: what prediction or classification would be useful? Predict ride performance from weather and elevation. Classify recipes by difficulty from ingredient lists. Predict fantasy football lineup scores. Predict bus delays from weather patterns.

Step 4: Pick the one that genuinely excites you. Passion is not a soft quality — it is what sustains you through three days of data cleaning before you have trained a single model. You can tell when a project was built with curiosity, and so can hiring managers.

Step 5: Scope it as an end-to-end project. Data collection, cleaning, exploratory analysis, modeling, evaluation, and a simple deployment or documentation layer. The scope does not need to be large. It needs to be complete.

The goal is a project that nobody else has. That specificity, more than your choice of algorithm, is what gets you remembered.

Presenting your projects so they actually get you interviews

Building a strong project is half the work. Presenting it is the other half, and this is where most people lose the interview before it starts.

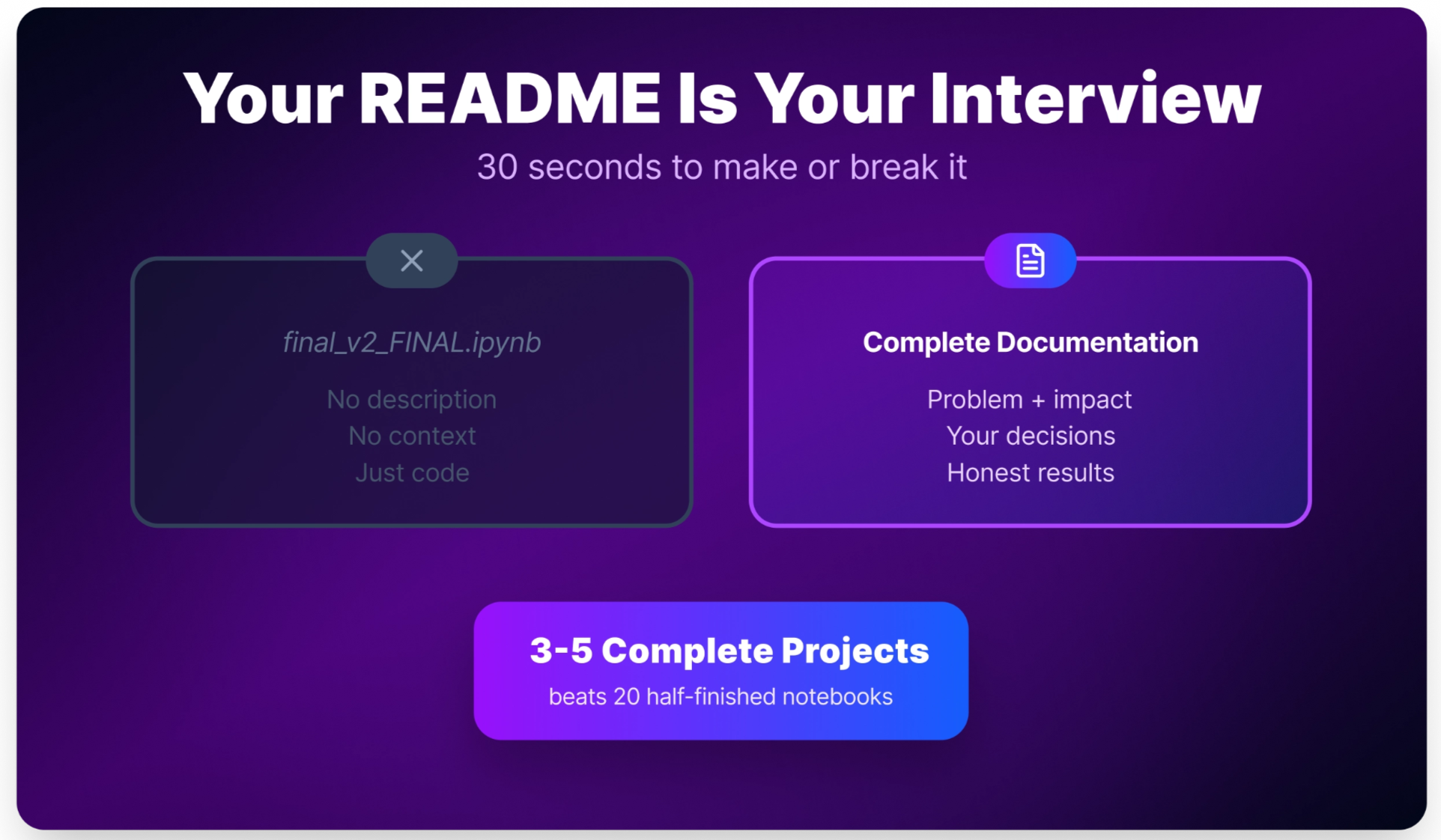

Your GitHub README is your first interview.

A recruiter spending 30 seconds on your project will decide whether to share it with an engineer based almost entirely on the README.

A strong README includes:

- The problem and why it matters (business context, not "this was a school assignment")

- Your approach and why you chose it

- Results with honest metrics

- How to run the code

- At least one visualization

A weak README often stops at the code, without explaining the problem, the approach, or the results.

Structure your portfolio write-up in four parts.

- The problem and why it matters.

- Your approach and why you chose it over alternatives.

- Results with honest evaluation — what worked, what did not, and what the limitations are.

- What you would do differently.

The fourth part is the one that signals growth mindset and genuine engagement, and it is almost always missing.

On your resume, describe projects in the format that best highlights what matters most. A few options depending on what you want to emphasize:

- Built [project type] using [tools] to [solve problem or deliver outcome].

- Developed [project] that improved [metric] or demonstrated [specific capability].

- Created [project] focused on [business problem], using [methods/tools] and evaluated with [key metric].

- Deployed [model/application] with [stack], showing [production or engineering capability].

Quantify wherever the data supports it:

- Built a fraud detection model using scikit-learn and SMOTE, achieving 94% precision on a held-out test set.

- Deployed a sentiment analysis app with Streamlit and logistic regression, demonstrating end-to-end NLP workflow and model serving.

- Created a customer churn project focused on retention risk, using class imbalance handling and threshold tuning to improve recall.

Show hiring managers what they are actually evaluating in your project:

- Did you identify the problem yourself, or just follow a tutorial?

- Can you explain your decisions and tradeoffs clearly?

- Is the code clean and documented?

- Did you go beyond the notebook?

- Does the project reflect awareness of business impact?

Pick one project and start this week

Three to five strong projects built end-to-end will serve you far better than twenty half-finished ones. Pick the project on this list that matches your current level and genuinely interests you, open a Jupyter notebook, find the dataset, and start exploring the data before you write a single line of model code.

Real ML work is messy and iterative. You will bounce between data cleaning, feature engineering, and model tuning many times over, both while learning and on the job.

Frequently asked questions

How many machine learning projects do I need for a portfolio?

Three to five well-executed, end-to-end projects is the sweet spot.

Quality beats quantity. One passion-driven original project is worth more than ten tutorial clones.

Hiring managers care about whether you can identify a real problem and solve it, not how long your project list is.

What is the best programming language for machine learning projects?

Python, overwhelmingly.

The ML ecosystem (scikit-learn, PyTorch, TensorFlow, Hugging Face) is built around Python, and it is the language used in virtually every production ML role.

R is a strong alternative for statistical modeling and research contexts, but for most applied ML portfolios, Python is the default choice.

Can I get a machine learning job with just projects and no degree?

Yes, but go in with realistic expectations.

Entry-level ML roles are competitive. Strong Python, SQL fluency, solid statistics fundamentals, and demonstrated project work are necessary, but may not be sufficient on their own.

Pair strong projects with active networking, targeted applications, and domain knowledge in the industry you’re targeting.

Projects open doors. Relationships and context get you through them.

What is the difference between a data science project and a machine learning project?

Machine learning projects center on building predictive models: classification, regression, clustering, or generation.

Data science projects may include exploratory analysis, visualization, and business storytelling without a predictive component.

Most strong portfolio projects include elements of both: you explore the data, build a model, and explain what it means in terms someone without an ML background can act on.

How long does it take to complete a machine learning project?

Beginner projects on this list typically take 5–10 hours.

Intermediate projects run 8–18 hours.

Advanced projects take 20–40 hours, and original projects with custom data can easily take 40–100+ hours.

These estimates assume you are learning along the way, not just executing familiar steps. Budget extra time for data cleaning.

Is machine learning a good career in 2026?

Demand is high and growing, but entry-level ML roles are genuinely competitive, more so than analyst or data engineering roles at the same experience level.

Companies increasingly expect candidates to do more than train models. Deployment awareness, debugging production issues, and communicating results to non-technical stakeholders are part of the job.

Projects that demonstrate these broader skills stand out significantly.

Can I do machine learning projects without a GPU?

Yes, for most beginner and intermediate projects.

Classical machine learning like regression, classification, and clustering runs comfortably on a laptop CPU.

For deep learning or transformer fine-tuning, Google Colab provides free GPU access directly in your browser.

How do I present ML projects so employers notice them?

A strong GitHub README makes the difference.

Include:

- The problem and why it matters

- Your approach and why you chose it

- Results with honest metrics

- What you would improve next

On your resume, use a format like: "Built [project type] using [tools] that achieved [measurable result]."

Employers evaluate your decision-making and communication, not just your code.

from Dataquest https://ift.tt/bOp6gWH

via RiYo Analytics
