The necessity of World Model, Theory of Mind, Continual Learning, and Late-Binding context

AI research can be a humbling experience. Some even claim that it remains at a relative standstill when it comes to replicating the most fundamental aspects of human intelligence. AI can correct spelling, make financial investments, and even compose music, but it cannot alert someone that their t-shirt is on inside out without being explicitly “taught” to do so. More to the point, it cannot discern why this might be useful information to have. And yet a five-year-old, just beginning to dress herself, will notice and point out the white tag at the base of her father’s neck.

Most researchers in the AI field are well aware that, despite the numerous advances in deep neural networks, the gap between human intelligence and AI has not significantly narrowed. Solutions that allow computationally efficient adaptive reasoning and decision-making in complex real-life environments remain elusive, and so does cognitive AI, that is, intelligence that would allow machines to comprehend, learn, and perform intellectual tasks similarly to humans. In this blog post, we will explore why this chasm exists and where AI research must go if we are to have any hope of crossing it.

Why ‘Mr. AI’ Can’t Hold a Job

How great would it be to work side-by-side with an AI assistant that could shadow us throughout the day, picking up our slack? Imagine how wonderful it would be if algorithms could truly unburden us humans from the “drudge” tasks of our workday so we could focus on the more strategic and creative aspects of our jobs. The problem is that, with a partial exception for systems like GitHub Copilot, a fictional ‘Mr. AI’ based on the current state of the art would likely receive its pink slip before the workday’s end.

For starters, Mr. AI is painfully forgetful, particularly when it comes to contextual memory. It also suffers from a crippling lack of attention. To some, this may seem surprising given the extraordinary large language models (LLMs) of today, including LaMDA and GPT-3, which in some situations appear as though they could well be conscious. However, even with the most advanced state-of-the-art deep learning models, Mr. AI’s work performance will invariably fall short of expectations. It doesn’t adapt well to changing environments and demands. It cannot independently ascertain that the advice it provides is epistemologically and factually sound. It cannot even come up with a simple plan! And no matter how carefully engineered its social skills are, it’s bound to stumble in a highly dynamic world with complex social and ethical norms. It simply doesn’t have what it takes to thrive in a human world.

But what does it take?

Four Key Properties of Advanced Intelligence

To impart more human-like intelligence to machines, one must first explore what makes human intelligence distinct from many current (circa 2022) neural networks typically used in AI applications. One way of drawing such a distinction is through the following four properties:

1. World Model

Humans naturally develop a “world model” that allows them to envision an endless number of short- and long-term “what if” scenarios that inform their decision-making and actions. AI models could become vastly more efficient with a similar capability, one that would allow them to simulate potential scenarios from beginning to end in a resource-efficient manner. An intelligent mechanism needs to model a complex environment with multiple interacting agents. An input-to-output mapping function (such as can be achieved with a complex feed-forward network) needs to “unroll” all the potential paths and interactions. In a real-life environment, the complexity of such an unrolled model would quickly explode, especially when it has to track an arbitrarily long relevant history for each agent. In contrast, an intelligent mechanism that models each factor and agent independently in a simulation environment can evaluate numerous what-if future scenarios and grow the model by replicating copies of the actors, each with its own knowable relevant history and behavior.
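To make the scaling argument concrete, here is a rough back-of-the-envelope sketch in Python. The function names and the sizes (5 agents, 3 actions each, a horizon of 10 steps) are hypothetical and chosen only for illustration. The assumption is that a flat input-to-output mapping would have to account for every joint action sequence, while a modular simulator only pays for the scenarios it chooses to roll out.

```python
def unrolled_paths(num_agents: int, actions_per_agent: int, horizon: int) -> int:
    """Joint action sequences a flat, fully unrolled mapping would have to cover."""
    joint_actions_per_step = actions_per_agent ** num_agents
    return joint_actions_per_step ** horizon


def modular_steps(num_agents: int, horizon: int, scenarios: int) -> int:
    """Per-agent model evaluations needed to simulate a chosen set of scenarios."""
    return num_agents * horizon * scenarios


# Hypothetical sizes, for illustration only.
flat = unrolled_paths(num_agents=5, actions_per_agent=3, horizon=10)
modular = modular_steps(num_agents=5, horizon=10, scenarios=1000)
print(f"flat unrolled paths: {flat}")
print(f"modular evaluations: {modular}")
```

With these illustrative numbers the flat count is 3^50, while rolling out a thousand selected scenarios costs 50,000 per-agent evaluations. Real systems are of course not this simple, but the gap grows in the same way as agents and time steps are added.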

Key to acquiring a world model with such capacity for simulation is the decoupling between the construction of the building blocks of the world model (an epistemic reasoning process) and their subsequent use in simulation of possible outcomes. The resulting “what-if” scenarios could then be compared in a way that remains consistent even if the simulation methodology changes over time. A special case of such an approach can be found in Digital Twins, where the machine is equipped (through self-learning or explicit design) with a model of its environment and can simulate the potential futures of various interactions.
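The decoupling described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a proposed architecture: the 1-D corridor, the `WorldModel` container, the `simulate` and `what_if` helpers, and the hand-written agent behaviors are all hypothetical. The point is only that the building blocks (one model per agent) are defined separately from the code that rolls them forward and compares outcomes.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical building block: an agent model maps the agent's current
# position on a 1-D corridor to its next position. In practice such models
# would be learned or designed; here they are hand-written stand-ins.
AgentModel = Callable[[int], int]
State = Dict[str, int]


@dataclass
class WorldModel:
    """Holds the building blocks only: one independent model per agent."""
    agents: Dict[str, AgentModel]


def simulate(world: WorldModel, start: State, steps: int) -> State:
    """Roll every agent forward; independent of how the models were built."""
    state = dict(start)
    for _ in range(steps):
        state = {name: world.agents[name](pos) for name, pos in state.items()}
    return state


def what_if(
    world: WorldModel,
    agent: str,
    candidates: Dict[str, AgentModel],
    start: State,
    steps: int,
    score: Callable[[State], int],
) -> Dict[str, int]:
    """Score each candidate behavior for one agent, holding the others fixed."""
    results = {}
    for label, policy in candidates.items():
        trial = WorldModel(agents={**world.agents, agent: policy})
        results[label] = score(simulate(trial, start, steps))
    return results


world = WorldModel(agents={"pedestrian": lambda p: p + 1, "robot": lambda p: p})
candidates = {
    "wait": lambda p: p,
    "advance": lambda p: p + 2,
    "retreat": lambda p: p - 1,
}
outcomes = what_if(
    world, "robot", candidates,
    start={"pedestrian": 0, "robot": 3}, steps=3,
    score=lambda s: abs(s["robot"] - s["pedestrian"]),  # final gap between the two
)
print(outcomes)
```

Because `simulate` only depends on the agent-model interface, the simulation loop could be swapped for a different one without touching how the individual agent models were constructed, and the scenario scores would remain comparable.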

Intelligent beings and machines use models of the world (‘World Views’) to make sense of observations and assess potential futures to select the best course of action. In transitioning from a ‘generic’ large-scale setting (like replying to web queries) to direct interaction in a particular setting that includes multiple actors, the world model must be scalable and customizable. A decoupled, modular, customizable approach is a logically different and far less complex architecture than one that attempts to simulate and reason in a single “input-output function” step.

2. Theory of Mind

“Theory of mind” refers to a complex mental skill that has been defined by cognitive science as a capacity to understand and predict the actions and beliefs of another person by tracking that person’s attention and ascribing a mental state to them.

In the simplest of terms, it is what we do when we attempt to read another person’s mind. We develop and use this skill throughout our lives to help us navigate our social interactions. It’s why we don’t remind our dieting co-worker of the giant plate of fresh chocolate chip cookies in the breakroom.

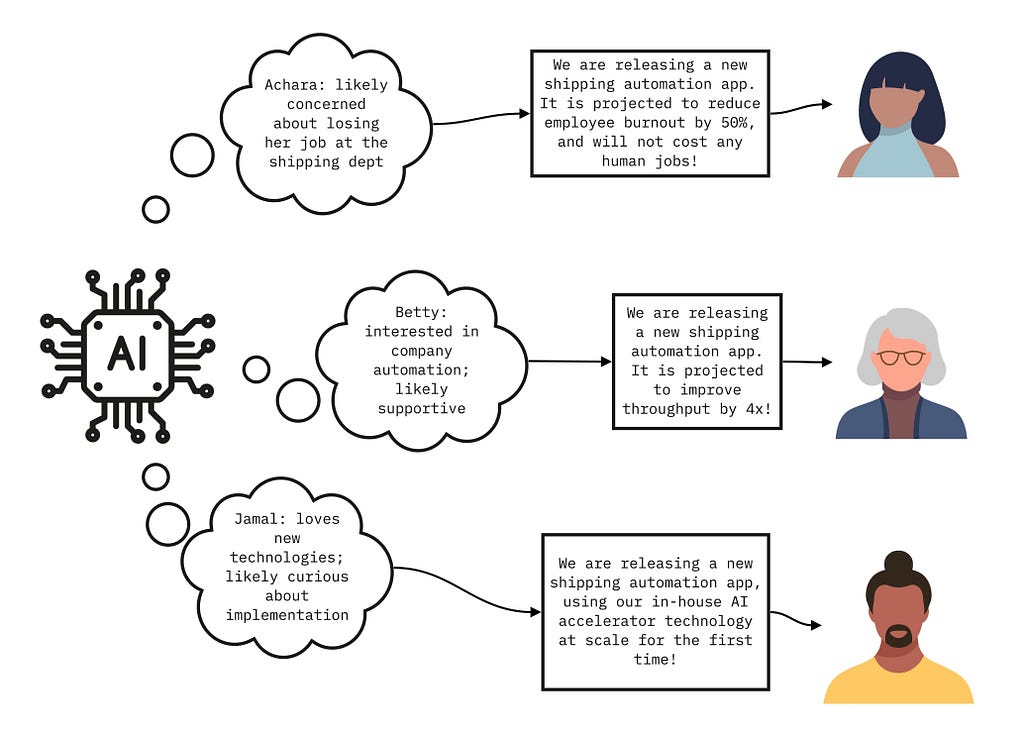

We see traces of theory of mind in AI applications such as chatbots that are uniquely attuned to the emotions of the customer they are serving, based on the reason they opened the chat, the language they are using, etc. However, the performance metrics used to train such social chatbots (typically conversation-turns per session, or CPS) merely train the model to maximize the attention from the human, and do not force the system to develop an explicit model of the human’s mind as measured by reasoning and planning tasks.

In a system that needs to interact with a particular set of individuals, theory of mind would require a more structured representation that is amenable to logical reasoning operations such as those employed in deductive and inductive reasoning, planning, inference of intent, and so on. Moreover, such a model would have to keep track of the varied behavioral profiles, be predictably updateable with the influx of new information and avoid relapsing into a previous model state.
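As a minimal sketch of what a “structured representation” of other minds might look like, consider the Python toy below. Everything in it is a hypothetical illustration rather than an established design: the `TheoryOfMind` and `MindModel` classes, the fact names, and the rule that an agent’s beliefs change only when that agent witnesses an event. It borrows the cookie example from above and tracks who knows where the cookies currently are.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Optional


@dataclass
class MindModel:
    """What one agent is assumed to believe: fact name -> believed value."""
    beliefs: Dict[str, str] = field(default_factory=dict)


@dataclass
class TheoryOfMind:
    """Tracks the actual world state and a separate belief profile per agent."""
    world: Dict[str, str] = field(default_factory=dict)
    minds: Dict[str, MindModel] = field(default_factory=dict)

    def observe(self, fact: str, value: str, witnesses: Iterable[str]) -> None:
        """Update the world state, and the beliefs of the witnesses only."""
        self.world[fact] = value
        for name in witnesses:
            self.minds.setdefault(name, MindModel()).beliefs[fact] = value

    def believes(self, name: str, fact: str) -> Optional[str]:
        """Return what the agent is assumed to believe, or None if unknown."""
        mind = self.minds.get(name)
        return mind.beliefs.get(fact) if mind else None

    def has_false_belief(self, name: str, fact: str) -> bool:
        """True when the agent holds a belief that no longer matches the world."""
        belief = self.believes(name, fact)
        return belief is not None and belief != self.world.get(fact)


tom = TheoryOfMind()
tom.observe("cookies", "breakroom", witnesses=["alice", "bob"])
tom.observe("cookies", "kitchen", witnesses=["alice"])  # bob did not see the move

print(tom.believes("bob", "cookies"))
print(tom.has_false_belief("bob", "cookies"))
```

Because each profile is an explicit, queryable structure, a planner could reason over it directly (for example, predicting where Bob will look for the cookies), and new observations update one profile without overwriting the others.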

3. Continual Learning

With some exceptions, the standard machine learning paradigm of today is batched, offline learning, potentially followed by fine-tuning to the specific task. The resulting models are therefore unable to extract useful long-term updates from the information they are being exposed to while deployed. Humans have no such limitation. They learn continuously and use this information to build cognitive models like world views and theories of mind. In fact, continual learning is what enables humans to maintain and update their mental models.

The problem of continual learning (also dubbed lifelong and continuous learning) is now garnering much stronger interest in the AI research community, in part due to the practical demands brought by the advent of technologies like federated learning and workflows like medical data processing. For an AI system that employs a world model of the environment it operates in, with theories of mind associated with the various agents in that environment, a continual learning capability would be critical to maintain and update historical and current state descriptors for each object and agent.
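The simplest possible instance of updating a per-agent state descriptor while deployed is an incremental (online) mean, sketched below in Python. This is only a stand-in under stated assumptions: the `RunningEstimate` and `AgentDescriptors` classes and the walking-speed example are hypothetical, and an online average does not address the harder parts of continual learning, such as learning new structure without forgetting old knowledge. It merely shows the contrast with batch training: each observation is folded in as it arrives, and no stored dataset is revisited.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class RunningEstimate:
    """Incrementally maintained mean of one observed quantity."""
    count: int = 0
    mean: float = 0.0

    def update(self, value: float) -> None:
        # Standard incremental mean: no past observations need to be stored.
        self.count += 1
        self.mean += (value - self.mean) / self.count


@dataclass
class AgentDescriptors:
    """One running estimate per agent, updated as observations stream in."""
    estimates: Dict[str, RunningEstimate] = field(default_factory=dict)

    def observe(self, agent: str, value: float) -> None:
        self.estimates.setdefault(agent, RunningEstimate()).update(value)


descriptors = AgentDescriptors()
for walking_speed in (2.0, 4.0, 6.0):  # hypothetical stream of observations
    descriptors.observe("pedestrian", walking_speed)

print(descriptors.estimates["pedestrian"])
```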

While the industry’s need is very clear, much remains to be done. Specifically, solutions that enable continual learning of information that can then be used for reasoning or planning are still in their infancy, and such solutions would be required to enable the model-building capabilities above.

4. Late-Binding Context

Late-binding context refers to producing contextually specific (rather than generic) responses that draw on the latest relevant information available at the time of the query or decision. Contextual awareness embodies all the subtle nuances of human learning: it is the ‘who’, ‘why’, ‘when’ and ‘what’ that inform human decisions and behavior. It keeps humans from resorting to reasoning shortcuts and jumping to imprecise, generalized conclusions. Rather, contextual awareness allows us to build an adaptive set of behaviors tailored to the specific state of the environment that needs to be addressed. Without this capability, our decision-making would be greatly impaired. Late-binding context is also closely intertwined with continual learning. For more information on late-binding context, please see the previous blog, Advancing Machine Intelligence: Why Context Is Everything.
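One way to picture the difference is to compare a responder that captures its context once, when it is built, with one that looks the context up at the moment of the query. The Python sketch below is a hypothetical illustration only: the helper names `make_early_bound` and `make_late_bound`, the “early-bound” label, and the location example are assumptions introduced here, not terminology from the text above.

```python
from typing import Callable, Dict

Context = Dict[str, str]


def make_early_bound(context: Context) -> Callable[[str], str]:
    """Responder that freezes a copy of the context at construction time."""
    snapshot = dict(context)

    def respond(query: str) -> str:
        return f"{query} -> {snapshot['location']}"

    return respond


def make_late_bound(get_context: Callable[[], Context]) -> Callable[[str], str]:
    """Responder that fetches the context only when a query arrives."""

    def respond(query: str) -> str:
        return f"{query} -> {get_context()['location']}"

    return respond


live_context = {"location": "office"}
early = make_early_bound(live_context)
late = make_late_bound(lambda: live_context)

live_context["location"] = "airport"  # the situation changes after setup

print(early("Where is the user?"))
print(late("Where is the user?"))
```

The early-bound responder keeps answering with the stale value it captured, while the late-bound one reflects the change, which is the behavior the paragraph above asks for.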

Human Cognition as a Roadmap to the Future of AI

Without the key cognitive capabilities listed above, many of the critical needs of human industry and society will not be met. There is therefore a fairly urgent need to devote more research to the translation of human cognitive capabilities into AI functionality, in particular those properties that distinguish human cognition from current AI models. The four properties listed above are a starting point. They lie at the center of a complex web of human cognitive function and provide a path towards computationally efficient adaptive models that can be deployed in real-life multi-actor environments. As AI proliferates from centralized, homogenized large models to a multitude of uses integrated within socially complex settings, the next set of properties will need to emerge.

References

- Mitchell, M. (2021). Why AI is harder than we think. arXiv preprint arXiv:2104.12871. https://arxiv.org/abs/2104.12871

- Marcus, G. (2022, July 19). Deep Learning Is Hitting a Wall. Nautilus | Science Connected. https://nautil.us/deep-learning-is-hitting-a-wall-14467/

- Singer, G. (2022, January 7). The Rise of Cognitive AI — Towards Data Science. Medium. https://towardsdatascience.com/the-rise-of-cognitive-ai-a29d2b724ccc

- Ziegler, A., Kalliamvakou, E., Li, X. A., Rice, A., Rifkin, D., Simister, S., … & Aftandilian, E. (2022, June). Productivity assessment of neural code completion. In Proceedings of the 6th ACM SIGPLAN International Symposium on Machine Programming (pp. 21–29). https://dl.acm.org/doi/pdf/10.1145/3520312.3534864

- Malone, T. W., Rus, D., & Laubacher, R. (2020). Artificial intelligence and the future of work. A report prepared by MIT Task Force on the work of the future, Research Brief, 17, 1–39. https://workofthefuture.mit.edu/research-post/artificial-intelligence-and-the-future-of-work/

- Thoppilan, R., De Freitas, D., Hall, J., Shazeer, N., Kulshreshtha, A., Cheng, H. T., … & Le, Q. (2022). LaMDA: Language models for dialog applications. arXiv preprint arXiv:2201.08239. https://arxiv.org/pdf/2201.08239.pdf

- Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J. D., Dhariwal, P., … & Amodei, D. (2020). Language models are few-shot learners. Advances in neural information processing systems, 33, 1877–1901. https://arxiv.org/abs/2005.14165

- Curtis, B., & Savulescu, J. (2022, June 15). Is Google’s LaMDA conscious? A philosopher’s view. The Conversation. https://theconversation.com/is-googles-lamda-conscious-a-philosophers-view-184987

- Dickson, B. (2022, July 24). Large language models can’t plan, even if they write fancy essays. TechTalks. https://bdtechtalks.com/2022/07/25/large-language-models-cant-plan/

- Mehrabi, N., Morstatter, F., Saxena, N., Lerman, K., & Galstyan, A. (2021). A survey on bias and fairness in machine learning. ACM Computing Surveys (CSUR), 54(6), 1–35. https://dl.acm.org/doi/abs/10.1145/3457607

- LeCun, Y. (2022). A Path Towards Autonomous Machine Intelligence, Version 0.9.2, 2022-06-27. https://openreview.net/pdf?id=BZ5a1r-kVsf

- El Saddik, A. (2018). Digital twins: The convergence of multimedia technologies. IEEE multimedia, 25(2), 87–92. https://ieeexplore.ieee.org/abstract/document/8424832

- Frith, C., & Frith, U. (2005). Theory of mind. Current biology, 15(17), R644-R645. https://www.cell.com/current-biology/pdf/S0960-9822(05)00960-7.pdf

- Apperly, I. A., & Butterfill, S. A. (2009). Do humans have two systems to track beliefs and belief-like states?. Psychological review, 116(4), 953. https://psycnet.apa.org/doiLanding?doi=10.1037%2Fa0016923

- Baron-Cohen, S. (1991). Precursors to a theory of mind: Understanding attention in others. Natural theories of mind: Evolution, development and simulation of everyday mindreading, 1, 233–251.

- Wikipedia contributors. (2022, August 14). Theory of mind. Wikipedia. https://en.wikipedia.org/wiki/Theory_of_mind

- Shum, H. Y., He, X. D., & Li, D. (2018). From Eliza to XiaoIce: challenges and opportunities with social chatbots. Frontiers of Information Technology & Electronic Engineering, 19(1), 10–26. https://link.springer.com/article/10.1631/FITEE.1700826

- Zhou, L., Gao, J., Li, D., & Shum, H. Y. (2020). The design and implementation of XiaoIce, an empathetic social chatbot. Computational Linguistics, 46(1), 53–93. https://direct.mit.edu/coli/article/46/1/53/93380/The-Design-and-Implementation-of-XiaoIce-an

- Reina, G. A., Gruzdev, A., Foley, P., Perepelkina, O., Sharma, M., Davidyuk, I., … & Bakas, S. (2021). OpenFL: An open-source framework for Federated Learning. arXiv preprint arXiv:2105.06413. https://arxiv.org/abs/2105.06413

- Vokinger, K. N., Feuerriegel, S., & Kesselheim, A. S. (2021). Continual learning in medical devices: FDA’s action plan and beyond. The Lancet Digital Health, 3(6), e337-e338. https://www.thelancet.com/journals/landig/article/PIIS2589-7500(21)00076-5/fulltext

- Singer, G. (2022b, May 14). Advancing Machine Intelligence: Why Context Is Everything. Medium. https://towardsdatascience.com/advancing-machine-intelligence-why-context-is-everything-4bde90fb2d79

Beyond Input-Output Reasoning: Four Key Properties of Cognitive AI was originally published in Towards Data Science on Medium.